How to feel good about AI

Finding the happy medium in the drama and reality of AI.

I’ve had a lot of questions about AI recently - how can I use it in my business? Is it going to take all the jobs? Are you worried about it? How do you use it?

The short answers to these questions are: it depends, no, not really, and sparingly.[1]

But here is the long answer.

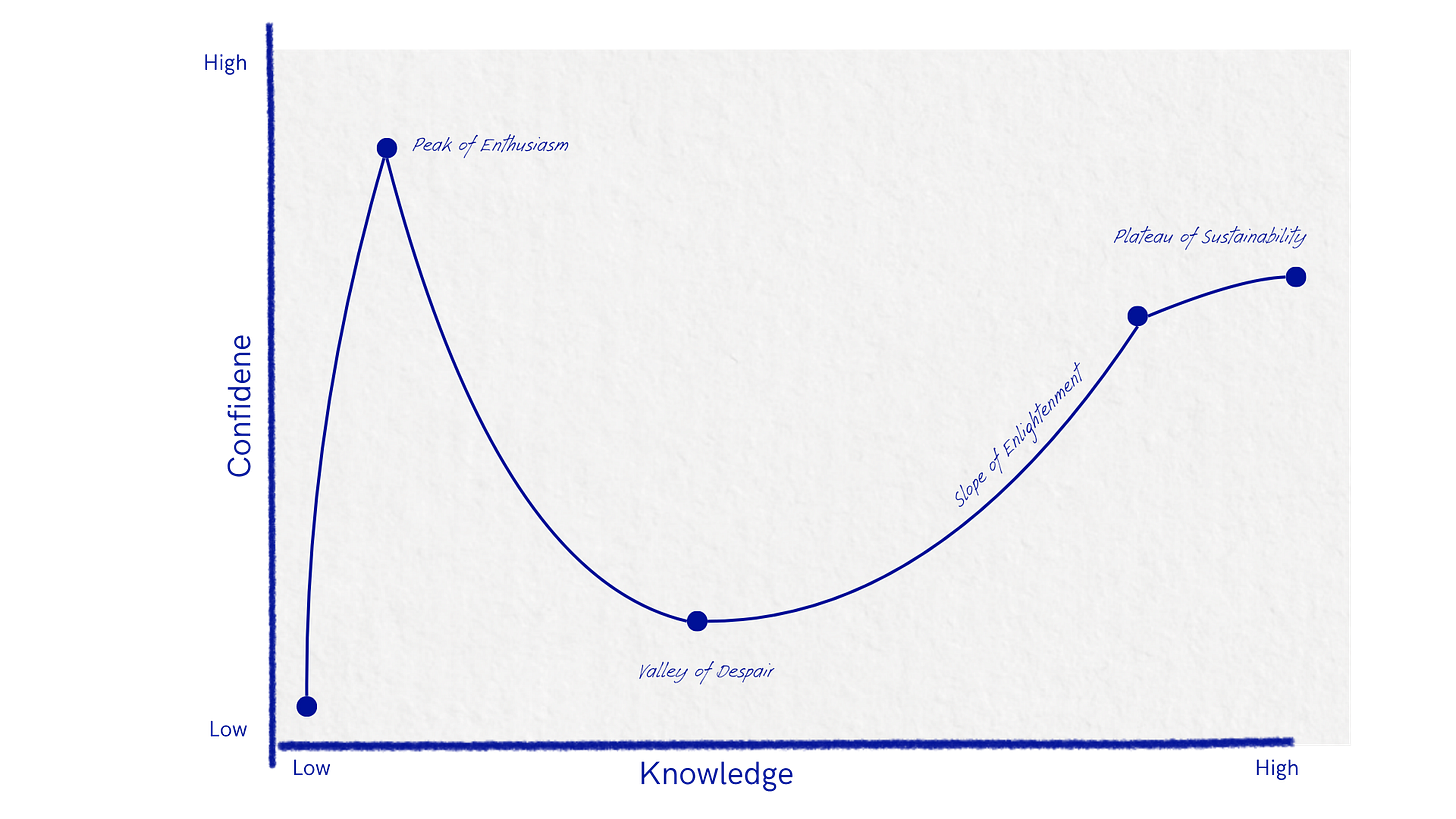

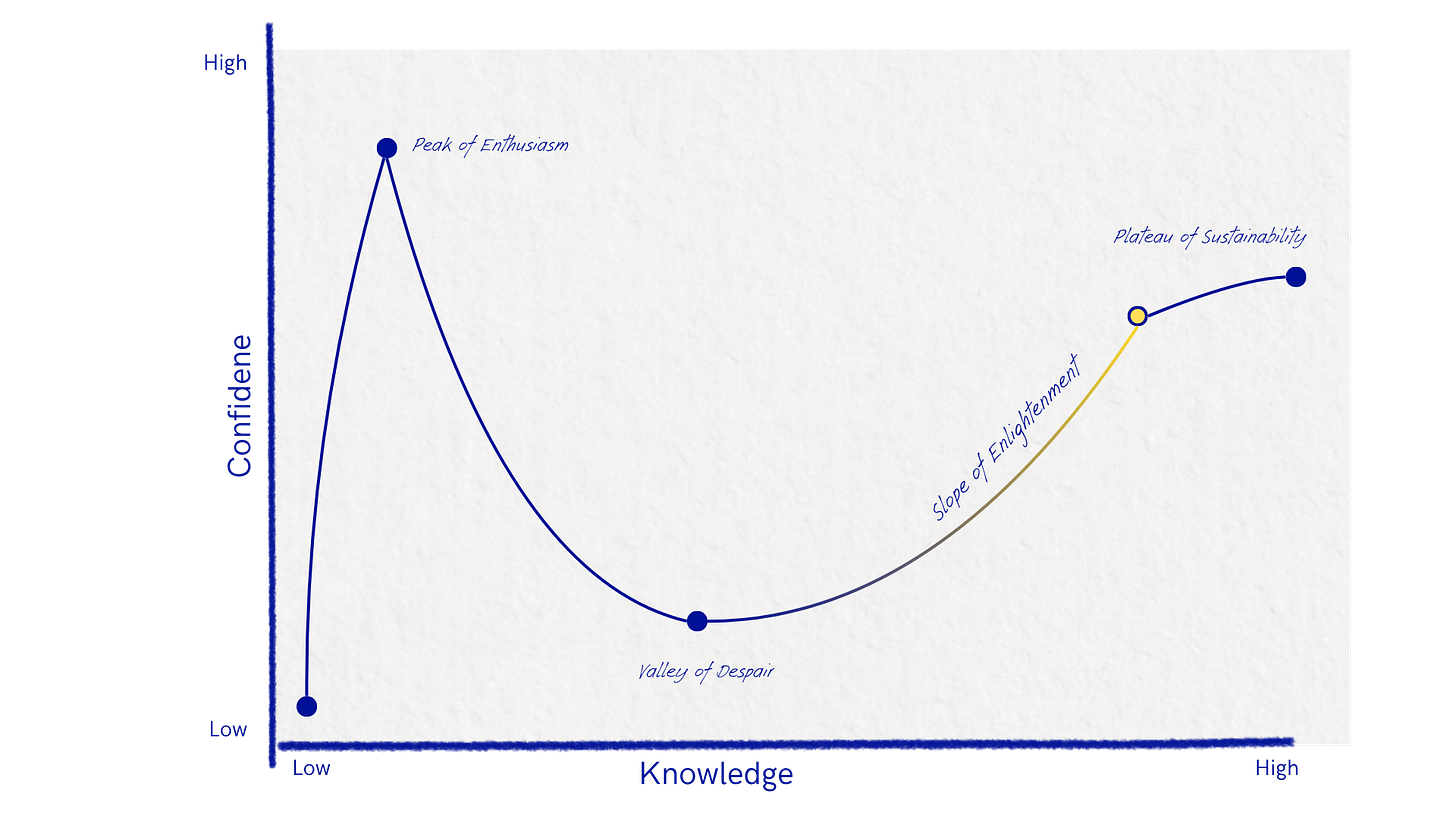

One of my favourite models of human behaviour is the Dunning-Kruger effect.[2] You may have heard of it (and I’ve mentioned it previously here). It describes the relationship between knowledge and confidence as you embark on something new.

What we are witnessing is the entire world going through the Dunning-Kruger curve at the same time.

Some are at the Peak of Enthusiasm, others are at rock bottom in the Valley of Despair, and a couple of interesting voices are peeking out from the Slope of Enlightenment. The result of this variable rollercoaster is a bunch of noise - opinions screeching out from every turn but no clarity about who is where.

So let’s jump on the rollercoaster and work out where you might be.

Before we get started - I’m using “AI” as a generic catch-all term but this article is really great at breaking down what is a term that describes a technology and what is just marketing speak.

The Peak of Enthusiasm

Let’s start at the Peak of Enthusiasm[3] because you have to start there.

This is the breathless evangelism of people excited by the opportunity and potential of a new technology.

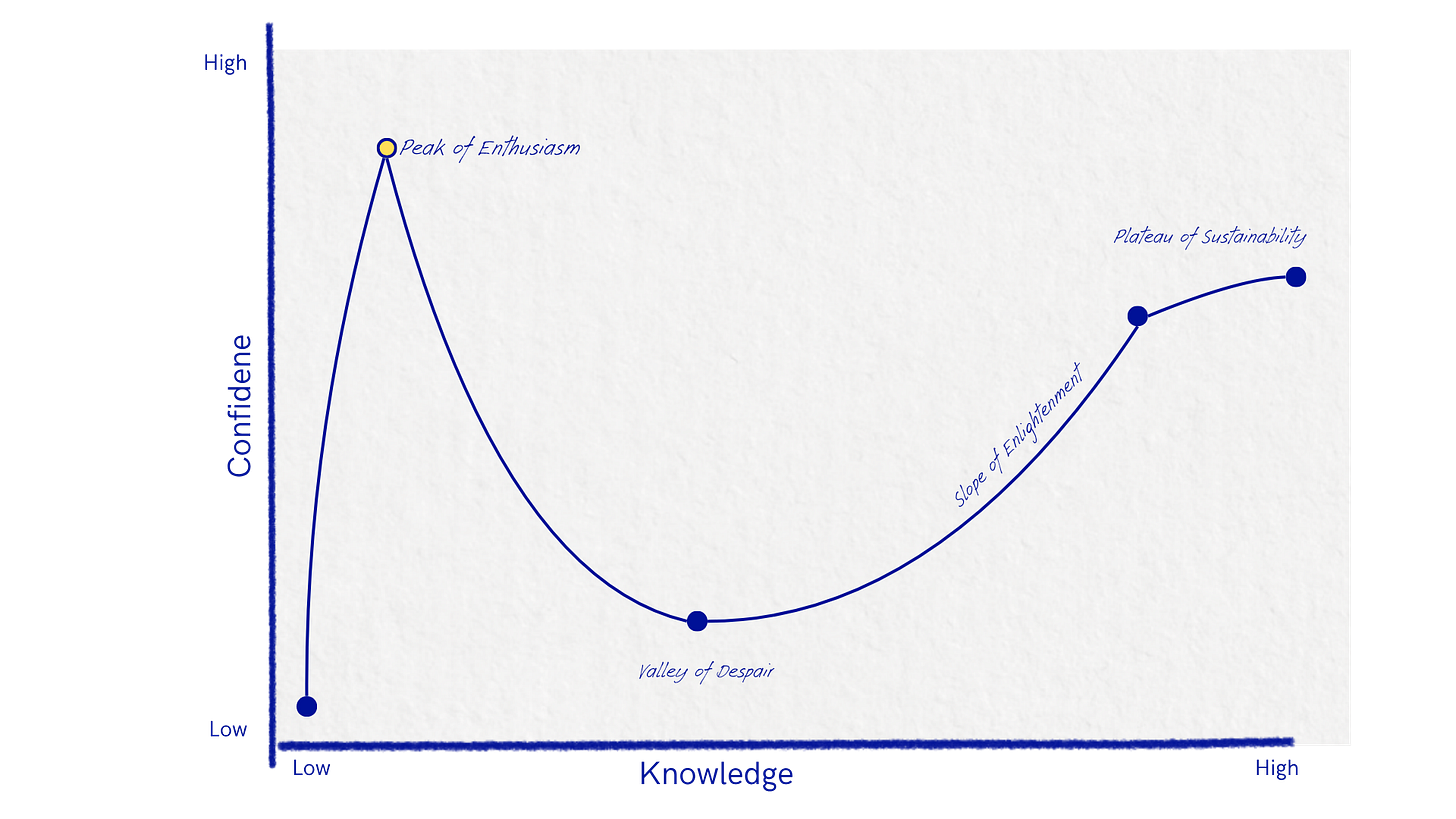

You’ll hear the loudest voices from those who are actively seeking investment in this area - which should already cause you to be a little suspicious.

This tweet from Sam Altman is so manipulative, it’s the perfect example of what I’m talking about: pretending that he is ‘off script’, admitting that even he might be made redundant by his own technology. Eye roll.

A business like OpenAI, which loses $7.77 for every dollar of revenue growth, is running on hot air, doing everything it can to convince others to invest in its vision of a future where it is the company you need for everything. These voices have a vested interest in making you feel like you are missing out and running late. The generalised anxiety they generate feeds their proposition that the ship is going down and you’d better jump on the lifeboat with them (even though you’re still standing on the beach).

You can and should ignore these voices, and I’ll come back to this a little later on. They are shifty salespeople and they are selling something, so take it all with a healthy heaping of salt.[4]

On the positive side though, when you first start using something like Claude or ChatGPT it is quite amazing. You’re thinking ‘wow this thing can spit out in seconds what used to take me days’. You play around with it, you generate an image of your dog as a person or yourself on a movie poster. It’s fun, it’s free and it’s a novelty.

There is also something to be said for accessibility - how effectively it lowers the barrier to entry for so many people. Now you can have a half-decent marketing strategy spun up in a couple of seconds. Or an email you would have obsessed over for half a day is now drafted and sitting in your inbox ready to send. I used it to fix the code on a banner on my website - I don’t know how to code so it was fun to be able to solve something like this for myself.

This is where the fun stuff, the obvious stuff, the quick and easy plug-and-play stuff lives. It’s not all bad or lazy; some of it is amazing and game-changing, particularly for people who struggle with the corporate wrapping that has to accompany ideas. It can search for you, summarise things, generate your grocery list, translate or workshop ideas with you - an almost infinite number of really great, surface-level use cases.

But what happens when we push past the obvious stuff and really get stuck into integrating it into our business?

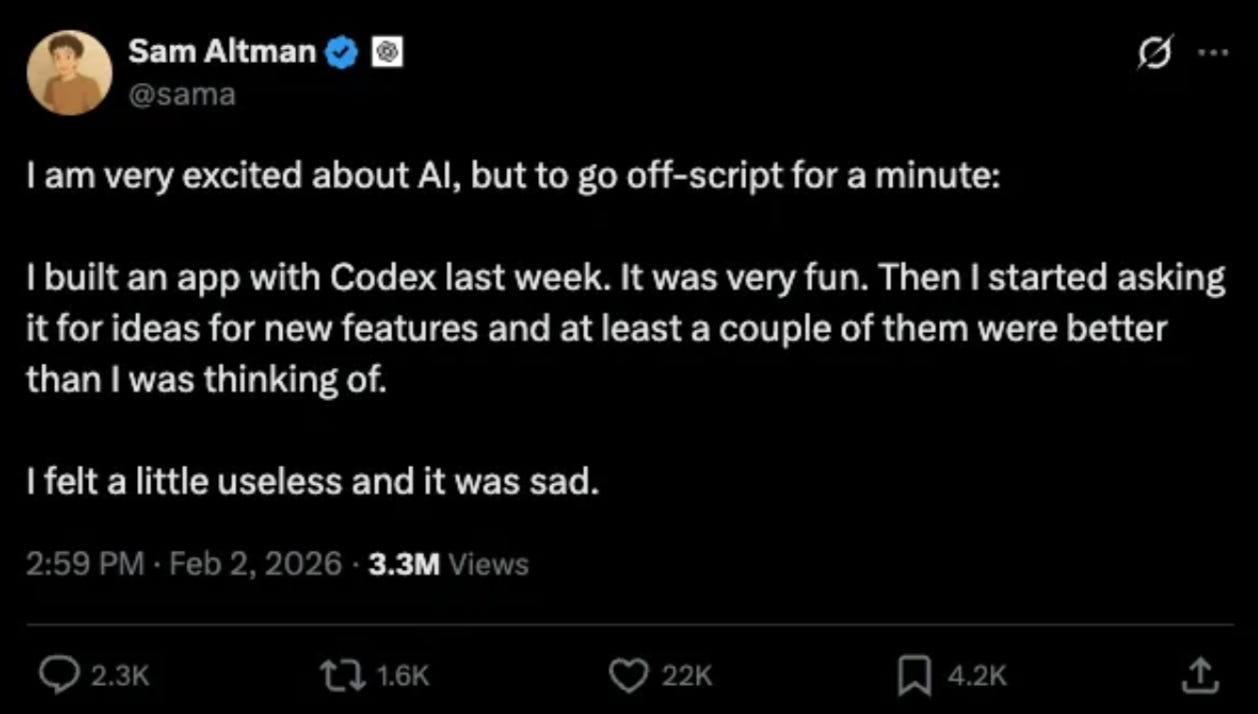

The Valley of Despair

This is where reality sets in. Now it’s important at this point to recognise two things. The first is that this Dunning-Kruger trajectory is not unique to AI; it is consistent with learning or doing anything new (whether it’s new to you or new in general).

The second is to observe where we are in terms of confidence and knowledge.

Our knowledge is increasing - we now know more than where we started - but our confidence has plummeted because we have now revealed how little we know in relation to the sum total of all knowledge on the topic. While it feels a little shitty, we are now no longer completely ignorant. We are out of the blue-sky exuberance of possibility and deep in the nitty gritty mud of reality.

But reality is really the only place that matters. So let’s stay here for a little while and get to know our surroundings as they actually exist.

From an AI perspective this is where we learn how much it does or doesn’t actually help us.

When these things first came out, I used them tons. I asked ChatGPT and Claude to plan my life, write my website and edit my Substack. I don’t use them for any of these things any more. Not because they can’t, but because the output felt really generic.

While it might expedite the process, I noticed one thing - it still takes time and effort to prompt, adjust and implement. What I found was that the output was not worth the time, proportionally. That is, if I just sat down and wrote my website myself, it wouldn’t be quicker but it would turn out better.

Now you might just think I’m terrible at prompting or obnoxious about my own copywriting skills, and while that could be the case, it’s not just me.

Studies are showing that the time saving we are perceiving is not actually translating to reality.

“When developers are allowed to use AI tools, they take 19% longer to complete issues—a significant slowdown that goes against developer beliefs and expert forecasts.

This gap between perception and reality is striking: developers expected AI to speed them up by 24%, and even after experiencing the slowdown, they still believed AI had sped them up by 20%.”

In this study, developers thought they were saving time with AI but it was actually taking them longer to complete the task. The interesting thing is that even though it was measurably slowing them down they still felt like it wasn’t.

Perception and reality can differ; the only way to tell if something will do what you think it will is to test it out. To save you a little time, these are a few big limitations I’ve observed.

Blend to Murky Brown

By definition, AI can only generate based on what has come before. This is helpful if you’re looking for recipes or research but unhelpful if you are trying to do something brand new.

If you have ever painted something, you know that if you blend all the colours together, you get a murky grey-ish brown. This is great if your murky brown employer has requested some new murky brown products, not great if you are trying to establish a unique and bright palette. Murky brown has a way of toning down all the other colours too.

I’ve seen it described as less like asking one smart person and more like asking 1000 idiots. Together they can come up with something, but it’s rarely going to be amazing. Adam Mastroianni describes it as an infinite midwit - sufficiently booksmart but lacking insight.

The Mystery Box

I recently read an article where someone was so excited to have sequenced their genome with AI. They took an incomplete test result from 23andMe and asked Claude to extrapolate the data. They were thrilled with their newly found ability to sequence genomes. Except they were not a genetic scientist, so they had absolutely no idea whether the information they had was correct. Never mind what they intended to do with this information.

Inaccurate information read by people with no expertise is not just meaningless, it can be dangerous. Decisions without owners, apps that ‘look great’ but it’s unclear how they function - all the result of the AI mystery box. Inputs go in, something happens, outputs come out.

This can result in things that work well when everything goes to plan, but break the minute something changes - and now no one knows how to fix it. It’s what our dramatic tech bros are hoping will happen - a mystery box that you pay for that does work you don’t really understand but now rely on.

Risk management 101 is about reducing single points of failure. A system that holds all the information and crashes, or a person who knows how everything works and leaves - these can already bring a company to its knees, so why would you hand over that much power to a tool you do not own?

Many vibe coders are realising their products don’t hold up under pressure. Amazon took 16 hours to rectify an AWS crash after replacing developers with AI. Don’t give your keys to the mystery box.

Side note: The value of software as scaffolding.

Some people are saying that AI is making software obsolete (and many software companies are taking that opportunity to justify a bunch of layoffs).

I don’t really agree. I think that software gives you the opportunity to make things faster and easier while avoiding the mystery box problem. It also provides the scaffolding for AI if that is useful in the future.

For example, project management software helps you visualise your current and upcoming work, and the inbuilt AI tool might help you forecast resourcing requirements based on past performance. Plus, trust me, you don’t really want to vibe code your own app. Let the experts do the hard work.

Don’t write off software, sometimes it is the right tool for the job.[5]

Doing useless stuff faster

If it doesn’t matter, don’t automate it, delete it.

When we automate something, we want it to improve our efficiency, but often we are not actually improving efficiency, we are simply outsourcing a tedious task elsewhere.

Now I’m not in the camp of ‘we should do everything the hard way to maintain some lofty sense of purpose’. If you can get it done faster and better, definitely do that - but make sure it is better and make sure it’s worth doing in the first place.

If something contributes no value, no one and nothing should be doing it. AI doesn’t cost $0 and the cost isn’t only financial. It’s bad for the environment, it’s a huge load on data centres and consumes fresh water. Even if we aren’t paying for it, it does cost something.

Furthermore, AI is not incentivised to be efficient. The more tokens you use the more money it makes. It’s still your responsibility to be efficient.

An over-reliance on written information

AI is really great at consuming what is documented, but in my career as someone who fixes problems, my goal is to work out what really happens. Generally, this means ignoring everything that has been written down and simply observing what actually goes on.

It’s not to say that people lie, but what people say and what they do are often different.

If you have ever done a job interview, you know what it’s like to get a vibe of someone within the first minute. Sure you have seen their resume but now you actually know what they are like. This is a subconscious, instantaneous process that is completely offline - body language, outfit, micro expressions, scent, energy - all register in milliseconds but you now know more about that person than if you studied their LinkedIn all day (a chilling thought).

Written, documented information is such a small proportion of life (and business) that AI tools can only provide incomplete answers. I think this is why so much of its advice or output can feel so hollow.

“I am angry” is nothing compared to the full body experience of being furious. Advice from a person that has no experience with something you’re struggling with can be aggravating, let alone from a machine that has never felt guilty or nervous or proud.

A human body helps you understand more than you know. I like this quote from David William Silva:

“A child learns physics by dropping a spoon from a high chair a thousand times; an LLM learns “physics” by reading sentences about gravity.”

You have more context than the machine, so back yourself.[6]

AI can’t execute

This is the most important one. It’s always been true that ideas are a dime a dozen. It’s very easy to come up with a great idea; it is significantly harder to execute it. The skill of execution is more than just coming up with a good plan (AI can help you there); that is table stakes. Great executors know how to get other people on board, mobilise a team and be comfortable moving themselves and others through ambiguity. It’s about being able to recognise risk, negotiate deals and build relationships.

These all require soft skills. If you don’t believe me, take a look at our mate Sam Altman - he still needs you to use his technology. The techniques to get you on board are all distinctly human. ChatGPT isn’t talking at conferences, doing press or meeting with investors (can you imagine), the human guy is.

AI is emailing you, flooding your feeds and auto replying to your comments - all avenues we are increasingly tuning out of. It’s not out of some sense of AI slop snobbery, but the same instinct that you employ in the job interview - the millisecond quick subconscious perception that there is no substance to the content.

Now not everything needs substance to be valuable - I’m more than happy to talk to a chatbot if it can resolve my issue quickly and easily. I don’t need an empathetic response, I just need my electricity connected.

But many things still do require a human touch, so don’t waste your time trying to outsource those things.

—

The Valley of Despair sounds dramatic but it’s my favourite phase because it’s so truthful. You are doing the hard work of testing and learning. The key is not to turn the Valley of Despair into the Valley of Sunk Cost. Don’t stay here digging deeper and deeper: test your hypothesis, get an answer, move on.

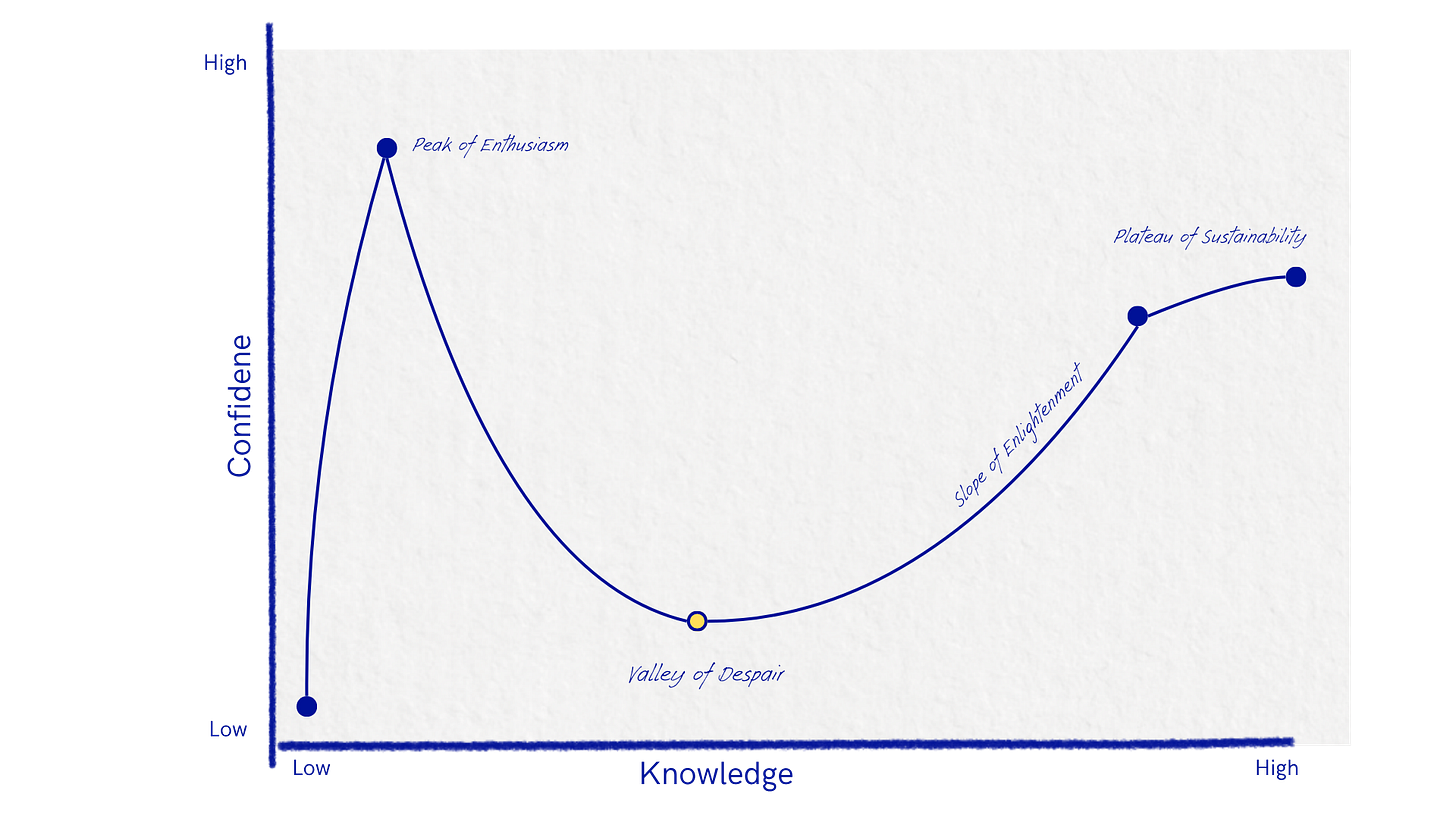

The Slope of Enlightenment

Okay so you’ve grappled with reality and you’ve found use cases that actually save you time, money or give you a genuinely better outcome. Welcome to the slope of enlightenment.

Here are some examples of AI use cases that live here:

WAVE music video - Using AI as a modality to create a unique effect.

Breast Cancer Screening - Identifying risk factors earlier than human eyes can.

Detecting Illegal Fishing Activity - Great example of a problem that would otherwise be impossible to solve at scale.

What do these have in common? Extremely niche, wielded by experts and most importantly, they solve a problem.

And here is where we arrive at the crux of what I believe about AI. It is a tool. A super flexible, very interesting, new tool but a tool nonetheless.

If you are building a house, you don’t stand around on the worksite looking for opportunities to use your hammer. You use the best tool for the given job.

AI is exactly the same. Know enough about it so that you know what it’s good for, but don’t waste time cramming it into situations that don’t need it.

The antidote to getting overwhelmed on the Dunning-Kruger rollercoaster is to become deeply obsessed with what you can understand fully - the problem you are trying to solve.

Falling in love with your problem, investigating all its edges and gaining clarity is what allows a solution to surface. It gives you a shape to work with, a clear direction to head in so you can recognise the right solution for your specific problem when it comes along.

It means you can be specific and selective about what is adding value and what is just noise.

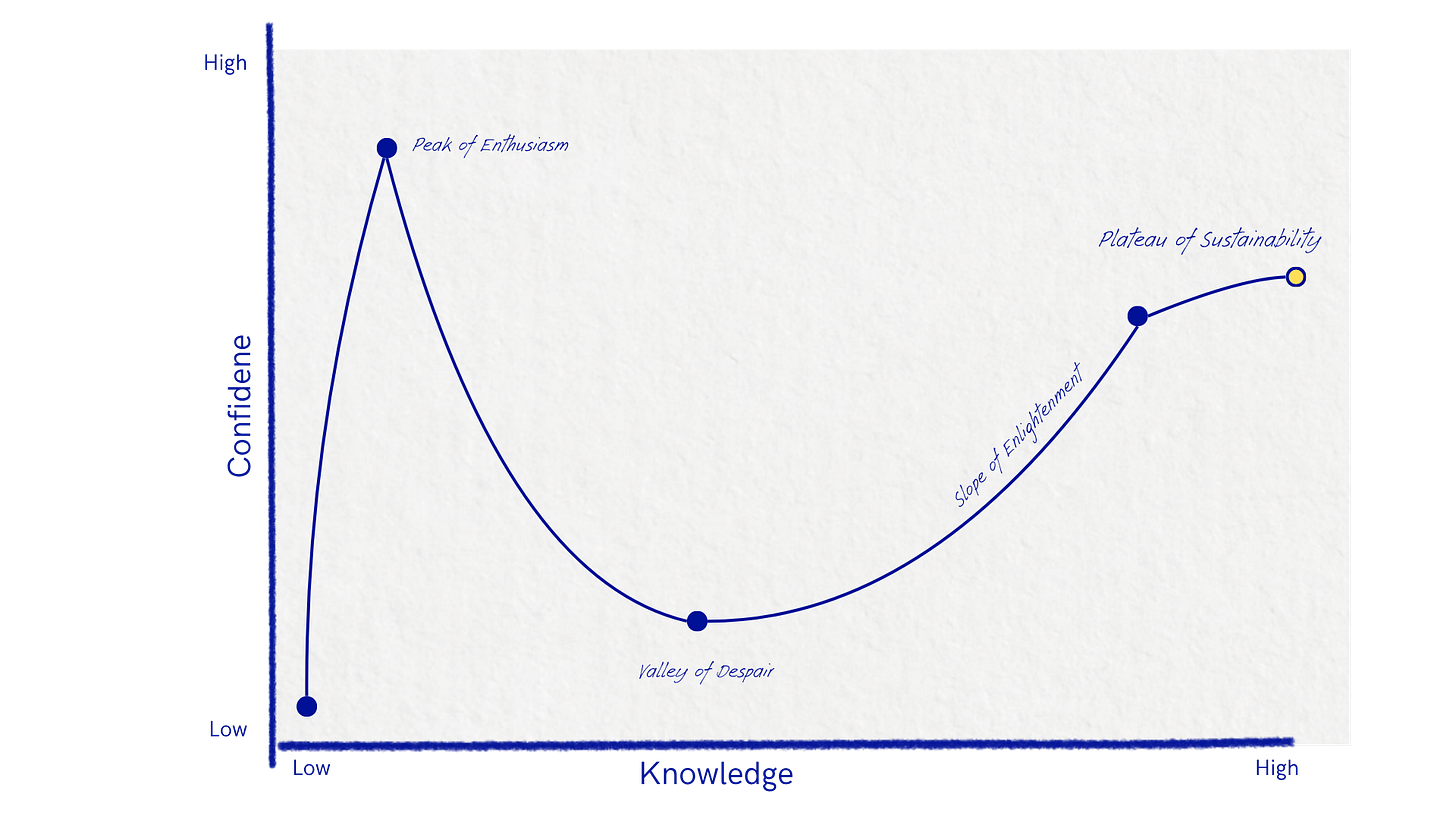

The Plateau of Sustainability

This is where it all comes to rest. Where the use case fits neatly in your business. Like swapping out the typewriter for the computer, the landline for the mobile, there comes a time where technology finds a natural resting place in society.

My prediction is that AI will find its natural resting place somewhere in the middle. It won’t be in the breathless, dramatic machinations of a company trying to convince you that your fresh air and water are just collateral damage for some delirious tech bro future. But it won’t go away either. It’s a great tool in the right context, so use it as far as it’s useful and don’t stress about the rest.

I’ve seen it described as waves and tides.[7] The waves are the headlines, the posts and the dramatic daily bombardment of information about AI. The tide is the deeper shift, the changes that will last longer and go deeper. Don’t spend too much headspace bobbing around in the waves; navigate according to the tides.

Make change on your own terms.

Panic is rarely a catalyst for a good decision. Back to our waves and tides analogy, you want your business to be a boat with sufficient ballast to keep you steady, upright and on track. You don’t want to be weighed down by unnecessary baggage but you don’t want to be so light you’re flung around in every storm.

How do we create that happy medium (*wink) in your business?

Know your Value

Understand specifically what it is that people would like to pay you for. It might be the service you offer, it might also be the supportive way you guide people through your process. What are the specific elements that are important and valuable?

If you’re creative, your value likely comes from your taste and ability to translate abstract thoughts into reality. The nature of inspiration is more than just inputs and outputs - see Imagination is the original prompt by Tasty Magazine by Nimisha Inc.

Understand the problem you want to solve

When I work with companies to help them implement software, I might have a general idea of what might work for them, based on all the software I’ve worked with, but I never lead with a solution.

I always seek to understand the problem completely. This helps me stay software agnostic and often there is a lot we can solve before we even get to the software selection stage. Do we have good processes, is our client communication clear, do we have the right skillset in the team? These things will all have a significantly larger impact than any software we can implement.

Knowing the problem and having a clear vision of where you want to get to, means you can fill in the ‘how to get there’ with the best solution - whether that’s AI or something else.

For more on Problem Solving…

Stay curious but don’t get distracted

Take the time to learn new things - have a tinker but don’t lock yourself in a room studying how to use Claude Skills if it won’t solve the problem you actually have.

Don’t let the naive excitement of people still at the peak of enthusiasm convince you that you’re missing out.

Lean into your strengths

The worst case scenario is implementing something that solves part of a problem but wipes out all of your strengths with it. Part of understanding a problem is also identifying what is already working well.

If you’re not sure, ask your customers what they love about working with you (and please don’t use AI to draft the email).

Be efficient to protect your value

Staying lean and efficient on your own terms is the best way to protect your business. At the risk of introducing yet another water based analogy, check out this article on maintaining the water level - meaning the amount you have to make to stay afloat.

Being good at focusing on your value and cutting any waste that distracts is a good skill to have, no matter the tool you use to get rid of that waste.

When you control your efficiency on your own terms you recover the headspace needed to see new opportunities.

So these are my current thoughts on AI. This topic has been bouncing around in my head for a while so I’d love to hear what you think! You can leave a comment, DM me or shoot me an email at hello@happymedium.au.

I also read a lot of really great articles to research this article so I’ve compiled them all here.

If you enjoyed this please leave a like to signal my value to the algorithm 🙏 and if you aren’t already subscribed, you can do that below. I write weekly(ish) about business, creativity and psychology.

If you would like to know more about Happy Medium, you can check out my website here. If you want some human help navigating a problem in your business or just making sense of what’s next, you can book an intro call here.

Thank you!

[1] If you’re curious, I used AI for two things in this article. 1) I asked Claude to help me with a title. I’ll let you guess if I used its suggestion or not. 2) I asked for more examples of our ‘Slope of Enlightenment’ use cases. I already had the WAVE video and the Breast Cancer Screening but it gave me the illegal fishing one.

[2] To my fellow psychology nerds, please don’t come for my very liberal interpretation of the Dunning-Kruger effect.

[3] Some diagrams refer to this as Mt. Stupid which is funny but feels a little harsh.

[4] I wanted to keep this article largely positive but I can’t really talk about AI without pointing out the fundamentally exploitative nature of these businesses:

They are bad for the environment. From Australia’s Chief Scientist, Dr Catherine Foley:

For example, in West Des Moines, Iowa, a giant AI hyperscale data centre was built to serve OpenAI’s most advanced model, GPT-4. During peak times in summer, the custom-built facility of over 10,000 GPUs draws about 6% of all the water used in the district, which also supplies drinking water to the city’s residents

They are exploitative in nature - The whole business relies on the extraction of value without permission or compensation. A technology that relies on extracting value and giving nothing back (financially or culturally) drains the ecosystem it exists in. It’s the same pattern as what Google & Apple did to the news media; using their work for free, convincing publications it’s good for them and the result is a scraggly and unfunded press, increasingly beholden to their financial landlords.

You are training AI for free - I can’t believe more people aren’t talking about this but every time you interact with AI you are training it for free (you might even be paying them to let you do it). Where your data goes and what it is used for is important - your IP is valuable and honestly you deserve to be compensated. Check out You Are Training AI: Who Gets Paid for It? for a really great deep dive on this.

[5] Having said that, software companies have been enshittifying themselves for some time now. One reason for this is the pursuit of the enterprise market, making easy money as software gets crusted on and the user is no longer the actual user but the control requirements of the IT department (I have an ongoing beef about this). It’s the reason Atlassian sponsors an F1 car.

Anyway, not all software is bad, and when it is good it will make you faster and better. If you need help working out how to do this, check out Automate your Admin.

[6] This bias towards written information isn’t just an AI thing. The greatest loss of ancient information was not in the fire of Alexandria but about 500 years later, when the papyrus was degrading and had to be transferred to the significantly more expensive leather manuscripts, of which only a few could be produced. The monks doing the transcribing had to make a decision on what to copy over, resulting in an unintentional bias and the loss of most pagan classical Latin texts. For more on this watch this interview with Ada Palmer (or just this bit if you don’t have 2 hours spare - but highly recommend watching the whole thing).

Likewise cultures that have non-written ways of storing knowledge have often been overlooked or underestimated. Our physical presence in the world, learning by engaging all our senses and tactile feedback all embody knowledge more effectively than simply reading and writing.

And yes I acknowledge the irony that you are reading this right now and I still feel the need to link to an article when you all know exactly what it feels like to remember the route home or smell that the coffee is starting to burn.

[7] Holly Garber sent me a newsletter by StraightUp Bookkeeping recapping a speech by Michael McQueen at the Xero Supercharge Conference. Must give credit where it’s due even if it’s long-winded 😅
